COVID-19 Vaccine Misinformation Monitor: MENA

13th April 2021

By Ciarán O’Connor and Moustafa Ayad

Conspiracy theories about COVID-19 and the subsequent development and rollout of COVID-19 vaccines are rampant across Arabic-language Facebook pages and groups. In recent analysis, ISD has found connections to dominant COVID-19 vaccine misinformation narratives and influencers in the West, as well as region-specific tropes that are tied to the geopolitics of the Middle East and North Africa and, in some instances, to religious discourse on the apocalypse.

Facebook has taken steps to address misinformation around the pandemic and COVID-19 vaccines, but there remain limitations, particularly in its ability to moderate languages other than English. In the past, such gaps in non-English content moderation have been highlighted around issues such as the genocide committed by the Myanmar military against the Rohingya. Such limitations allow harmful and deceptive information, as detailed in the case of Arabic-language COVID-19 vaccine misinformation, to prosper on Facebook and have potentially far-reaching offline consequences.

This Dispatch is part of an ongoing series examining COVID-19 vaccine misinformation on Facebook in countries and regions across the world. This edition focuses on Arabic-language communities in the Middle East and North Africa (MENA) throughout January and February 2021. For more on the methodology, see here.

________________________________________________________________________

Key Findings

➜ Among a list of 18 Arabic-language COVID-19 conspiracy and misinformation Facebook pages analysed, the number of Facebook users that like these pages grew 42% between September 2020 and March 2021. As of 1 March, these Facebook pages had a combined 2,437,318 likes.

➜ More than half (56%) of the Arabic-language COVID-19 misinformation Facebook pages analysed were launched in 2020, during the height of the pandemic.

➜ In January and February 2021, a sample of 10 Arabic-language COVID-19 conspiracy and misinformation Facebook groups hosted 7,313 posts (photos, links, statuses and videos) that generated 239,756 interactions (reactions, comments and shares). These figures represent a 44% increase in the number of posts and 43% increase in interactions among these groups in six months.

➜ Arabic-language conspiracy hubs are masquerading as independent institutions, think tanks and research initiatives and are manipulating COVID-19 data. Read more about our analysis of one such disinformation hub, the Center for Reality and Historical Studies, here.

➜ Arabic-language COVID-19 misinformation pages on Facebook regularly amplify COVID-19 misinformation coming from the West, using Arabic subtitling or voiceovers often unencumbered by moderation and fact-checking efforts. However, there are distinct themes and issues that Arabic-language COVID-19 misinformation communities use to frame vaccine and pandemic conspiracies, such as narratives about the coming apocalypse and antisemitic tropes.
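The growth figures in the findings above can be sanity-checked with simple arithmetic. The sketch below is illustrative only: it assumes each percentage is measured against the earlier baseline, and the helper names are hypothetical rather than part of ISD's methodology. Under that assumption, the 18 pages would have had roughly 1.72 million combined likes in September 2020, and 10 is the only whole number of pages out of 18 that rounds to 56%.

```python
def baseline_before_growth(current: float, growth_pct: float) -> float:
    """Starting value implied by a current value and a percentage increase."""
    return current / (1 + growth_pct / 100)


def pages_matching_share(total_pages: int, rounded_pct: int) -> list[int]:
    """Whole-number page counts whose share of total_pages rounds to rounded_pct."""
    return [
        n for n in range(total_pages + 1)
        if round(100 * n / total_pages) == rounded_pct
    ]


# Figures quoted in the Key Findings; the baselines are derived, not reported.
implied_likes_sept_2020 = baseline_before_growth(2_437_318, 42)
implied_posts_before = baseline_before_growth(7_313, 44)
implied_interactions_before = baseline_before_growth(239_756, 43)
pages_launched_in_2020 = pages_matching_share(18, 56)

print(f"Implied page likes, September 2020: {implied_likes_sept_2020:,.0f}")
print(f"Implied group posts in the earlier period: {implied_posts_before:,.0f}")
print(f"Implied interactions in the earlier period: {implied_interactions_before:,.0f}")
print(f"Page counts consistent with 56% of 18: {pages_launched_in_2020}")
```

These baselines are back-calculated from rounded percentages, so they should be read as approximations rather than reported data.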

Misinformation specifically about the vaccine

“Cytokine storm” claim spreads among Arabic-language communities. Leading COVID-19 vaccine disinformation themes popular in the West, as highlighted in our analysis on Ireland and Canada, are now being shared among Arabic-language communities. This highlights how content, claims and conspiracies first seeded in the West are being modified and localised to broaden the reach of deceptive information to new audiences on Facebook.

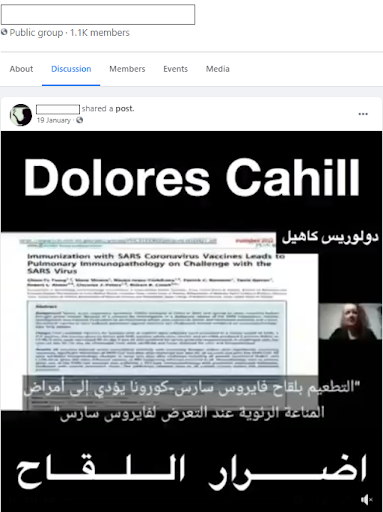

In mid-January, Arabic-language communities shared a video of Dolores Cahill, an Irish professor who has previously said that there is no need for a vaccine. The video features Cahill speaking, with added Arabic subtitles, promoting the baseless claim that mRNA vaccines (those produced by Pfizer and Moderna) will cause a “cytokine storm”, or an overreaction of the body’s immune system causing injury or death in recipients.

This claim is prevalent among COVID-19 conspiracy theorists and has been the subject of fact checks from Reuters, Health Feedback and Lead Stories, who have all stated that it is false or lacks evidence.

Fig 1: Screenshot of video featuring Dolores Cahill promoting the “cytokine storm” claim with added Arabic subtitles.

This version of the video with Arabic subtitles was first posted on a Facebook profile and subsequently shared at least 67 times. In the post, the Facebook user repeats the claim made by Cahill in the video and states that mRNA vaccines will cause injuries and death in recipients. None of these posts featuring this version of the video include a fact check or information labelling it as false or lacking evidence, highlighting a concerning gap in Facebook’s efforts to tackle harmful COVID-19 misinformation.

Claim of Pfizer CEO ‘refusing’ vaccine shared 132 times. A Facebook page that promotes scepticism and distrust of doctors and the pharmaceutical industry pushed a misleading claim that aims to reduce trust and confidence in Pfizer’s vaccine and other COVID-19 vaccines. The page, whose name translates to “What Doctors Don’t Tell You”, made use of a false claim that was first shared in the US in December, demonstrating how rapidly COVID-19 misinformation moves across borders and languages.

The page published a short video featuring comments from Pfizer CEO Albert Bourla and claimed that he had “refused” to take the vaccine, using this to generate suspicion among followers. This is false. In December, Bourla said he had not yet received the vaccine because he did not want to “cut the line” ahead of frontline workers. The same claim, alleging that Bourla did not plan to take the vaccine, spread in the West, and USA Today devoted an article to debunking it.

The Arabic-language Facebook post, published on a page with over 55,600 likes, has been liked over 265 times and shared over 56 times to date and features no fact-checking information. The video was also published on a backup Facebook page linked to the main page, where it was liked 138 times and shared 76 times. This post, published on a page named “What Doctors Don’t Tell You 2” that operates in the same manner as the primary Facebook page, also features no fact-checking information debunking this claim.

Misinformation about the vaccine rollout

Videos of women shaking after purportedly taking vaccines shared among Arabic-language communities. Videos showing people shaking or convulsing, supposedly after receiving a COVID-19 vaccine, are typically shared online to foster distrust and fear of the vaccines, and footage taken in the US was shared widely among the Arabic-language communities analysed in this report. Two videos in particular went viral on social media platforms in the West in January and were the subject of numerous fact checks and reports, as seen in Wired and PolitiFact.

The journey of these videos across Arabic-language communities online highlights several key takeaways about COVID-19 conspiracies, the role of Facebook in the spread of disinformation, and the prevalence of fact checks outside the West. One of the videos was shared by a low-level COVID-19 conspiracy Facebook page on 13 January and now features an Arabic-language fact check stating that the footage is “missing context” and the video “could mislead people”.

Yet ISD identified a different user who included both of these videos in one post, as well as a separate image claiming that Nancy Pelosi suffered facial paralysis after receiving the vaccine. The post was shared 21 times, including in at least two Arabic-language conspiracy Facebook groups, which have 17,300 and 2,800 members respectively. All posts sharing these videos were used to support efforts to dissuade people from getting the vaccine, yet none include fact checks or similar information noting that the content is “missing context”.

Unverified claims about vaccine injury and death are common. One Facebook group in our sample, with over 1,100 members and the words “corona” and “conspiracy” in its title, published numerous posts that claim vaccines are not safe and have killed hundreds of people in the US and UK.

One Facebook post claimed the US Centers for Disease Control and Prevention (CDC) had covered up the deaths of 1,170 people who died after being vaccinated, despite the CDC publishing these figures on its site. The post claimed these people died as a result of the vaccine. These deaths, which occurred between December 2020 and February 2021, represent just 0.003% of all those vaccinated during this period and “no evidence suggests a link” between the vaccine and their deaths, Bloomberg reported.
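To put the 0.003% figure in perspective, the size of the vaccinated population it implies can be back-calculated. This is a rough sketch, not a figure from the report or from Bloomberg: it assumes 0.003% is a value rounded to one significant figure, and the function name is hypothetical.

```python
def implied_population(count: int, share_pct: float) -> float:
    """Population size implied when `count` people make up `share_pct` percent of it."""
    return count / (share_pct / 100)


deaths = 1_170  # deaths reported following vaccination, as quoted above

# Central estimate, plus bounds assuming the true share lies
# between 0.0025% and 0.0035% before rounding to 0.003%.
central = implied_population(deaths, 0.003)
lower = implied_population(deaths, 0.0035)
upper = implied_population(deaths, 0.0025)

print(f"Implied number vaccinated: about {central / 1e6:.0f} million")
print(f"Plausible range: {lower / 1e6:.1f} to {upper / 1e6:.1f} million")
```

In other words, the quoted share corresponds to deaths among tens of millions of vaccinated people, which is the context the Facebook post omitted.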

Similarly, a Facebook post claimed 107 people suffered “sudden death” after receiving the Pfizer vaccine in the UK. Once more, the number of deaths is accurate but, according to the Wall Street Journal, the British medicines regulator does not believe these were linked to the vaccine. COVID-19 conspiracy communities regularly promote claims like this, using alarmist language to provoke strong reactions and foster fear and hostility towards vaccines.

Misinformation about the societal impact of a vaccine

“Get ready for the Hunger Games”: Apocalypse conspiracies take hold. Arabic-language conspiracy communities on Facebook have published and promoted conspiracies claiming COVID-19 and the race for vaccines will lead to civic unrest and potential societal collapse in the form of an apocalypse. At the core of these conspiracies is the “antichrist” embodied by Microsoft founder Bill Gates. Three of the largest of these pages, “Book Knowledge”, “Uncovering Secrets” and the “End of the World” have a combined 1,231,161 followers.

Combined, these pages account for more than 50% of all of the followers of the 18 pages tracked by ISD researchers during this period. Their appeal relies on a series of provocative clickbait-style video titles and captions, as well as over-the-top vaudevillian video thumbnails. These pages not only dub Bill Gates the antichrist, but also cast him as an agent of a Masonic and Zionist cabal and of the coming of a “New World Order”.
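The “more than 50%” share can be checked against the totals quoted in this Dispatch. The sketch below assumes that the combined follower count of the three pages and the combined like count of all 18 pages are comparable measures of audience size; the report gives one as followers and the other as likes, so the result is indicative only.

```python
# Combined followers of the three largest apocalypse-themed pages.
top_three_followers = 1_231_161

# Combined likes of all 18 pages as of 1 March (from the Key Findings).
all_pages_likes = 2_437_318

# Assumption: likes and followers are treated as interchangeable here.
share_pct = 100 * top_three_followers / all_pages_likes

print(f"Share held by the three largest pages: {share_pct:.1f}%")
```

On these figures the three pages sit just over the halfway mark, consistent with the statement above.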

Fig 2: Thumbnail for a video, still live on Facebook and YouTube, promoting apocalypse conspiracies featuring Bill Gates

One Facebook page, which has over 134,000 likes, was a serial promoter of these claims. In one post, the page published a video with dramatic scenes showing military generals preparing for conflict, intercut with images of crowded hospital wards and graphics about “COVID-19 vaccine trials”. The post was shared 444 times. Other videos on the page promote claims about how Bill Gates supposedly stands to capitalise from the current crisis.

One post featured a video that shared details about Gates’ “horror plan” for 2021, alleging that Gates wishes to depopulate the planet and stands to make millions of dollars from COVID-19 vaccine production. This claim has circulated since the beginning of the pandemic, but there is no evidence that Gates or his foundation will profit from a coronavirus vaccine, according to fact checks by PolitiFact.

Another claim published by this Facebook page alleges that Gates is engineering global uncertainty around COVID-19 and the page warned its followers to “get ready for the Hunger Games”. These two videos have been shared over 550 times on Facebook combined.

In February, Facebook expanded its policies to remove all content with vaccine-related misinformation, moving beyond just coronavirus-related claims. However, as demonstrated in the examples here, the platform is still failing to enforce its policies on posts that push false claims about COVID-19 vaccines, let alone the broader spectrum of health disinformation about vaccines.

It is clear that Facebook has failed to effectively tackle viral COVID-19 misinformation among Arabic-language communities on its platform. Many claims that have been flagged for fact checks in the West have escaped such scrutiny among Arabic-language Facebook communities. The report suggests an imbalance in the effectiveness of content moderation efforts relating to health disinformation in Arabic compared to English. This tracks with previous research conducted by ISD on parallel issues of content moderation, including on terrorist-related content on Facebook.

What is clear from this analysis and its findings is that the same conspiracies flagged as harmful to audiences in the West are untouched and flourishing in other geographies.

Ciarán O’Connor is an Analyst on ISD’s Digital Analysis Unit with expertise in the far right, the online disinformation environment and open-source research methodologies. Before joining ISD, Ciarán worked with Storyful news agency. He has an MSc in Political Communication Science from the University of Amsterdam and is currently learning Dutch.

Moustafa Ayad is the current Executive Director for Africa, the Middle East and Asia at ISD, overseeing more than 20 programs globally. He has more than a decade’s worth of experience designing, developing and deploying multi-faceted P/CVE, elections and gender projects in conflict and post-conflict environments across the Middle East and Africa.