Digital Dispatches

April 1, 2024

ISD UK, ISD-US

Foreign Information Manipulation and Interference, Terrorism and Extremism

Pro-CCP Spamouflage campaign experiments with new tactics targeting the US

By: Elise Thomas

The Spamouflage network is a long-running and widespread but largely ineffective operation, traditionally pushing pro-CCP narratives. However, there is evidence that operators are now experimenting with new ways to increase their influence.

One tactic, which ISD has called ‘MAGAflage’, involves accounts posing as right-wing Americans and exploiting domestic divisions. Although this strategy appears to be nascent, it has the potential to make Spamouflage significantly more effective.

The Spamouflage campaign has become infamous amongst researchers for both its dogged persistence and its lack of significant innovation, despite the apparent futility of its operations. Since at least 2017 it has used thousands of accounts to spam low-quality and uncompelling content across more than 50 websites, forums and social media platforms, receiving little or no engagement from real users. Even the adoption of new technology, such as generative AI to create fake movie posters, has had little impact.

However, recent investigations into Spamouflage’s targeting of the conflict in Gaza and the US elections uncovered small but concerning tactical evolutions in Spamouflage activity on X. The accounts are using real videos, images and viral posts targeting US culture war issues, primarily from a right-wing perspective. This includes LGBTQ+ issues, immigration, racism, guns, drugs and crime. For the first time we have also found Spamouflage accounts posing convincingly as Americans.

The approach resembles, to some degree, tactics used by Russian state actors. There is no indication of any direct coordination, though this cannot be ruled out. It is possible that the Spamouflage operators, like many influence campaigns before them, have simply concluded that amplifying real, existing divisions using real, existing content is the cheapest, easiest and most effective route to deepen political and social divides in the US.

Although only a handful of accounts have effected this change and it has not been universally successful, it has already generated engagement from real US users. The vast scale of Spamouflage has previously been offset by its ineffectual tactics and uncompelling content; if the operators find a strategy which works, potentially augmented by generative AI, it could start to become a real problem.

This is particularly concerning in light of the erosion in the capacity of major platforms to detect and respond to sophisticated influence operations, after key Trust and Safety teams have been dismantled or substantially reduced over the past year.

The three examples below illustrate the incremental changes in Spamouflage’s content since 2017. In the early years, most posts consisted of JPGs which looked as though they had been put together in Microsoft Paint or something similar. These featured several paragraphs in Mandarin and usually a couple of images, and would be posted alongside Mandarin or English text. Around mid-2020 their repertoire began to include videos featuring automated voiceovers, again in a blend of English and Mandarin. From at least 2023 they also began to incorporate AI-generated images to illustrate their narratives. Notably, none of these appear to have been successful at generating significant organic engagement from real users.

The MAGAflage accounts

ISD has identified four accounts linked to Spamouflage which are posing, convincingly, as supporters of Donald Trump and the MAGA movement, and a fifth which is posing as Greek but engaging with American political topics. Although all have posted significant quantities of Spamouflage content and narratives supporting the Chinese Communist Party, they are not being artificially boosted by the rest of the Spamouflage network.

Instead, they are building authentic pro-Trump audiences, including through MAGA community ‘follow trains’ in which social media users agree to follow one another and so boost each other’s follower count. They have also posted asking for more followers, receiving hundreds of apparently organic likes and replies.

One of the accounts, @WubbaLubbaDub18, began in 2020 as a standard Spamouflage account. It was engaged mostly in boosting official CCP accounts and pro-CCP narratives, such as smearing Hong Kong’s pro-democracy protesters, in Mandarin. The account fell silent between April 2022 and May 2023. When it resumed it was posting in English and sharing AI-generated content to illustrate narratives, which is increasingly common across the network (for more on this see this previous Dispatch on Spamouflage and the US 2024 elections). It is possible that the account may have changed its name, profile picture and bio around this time.

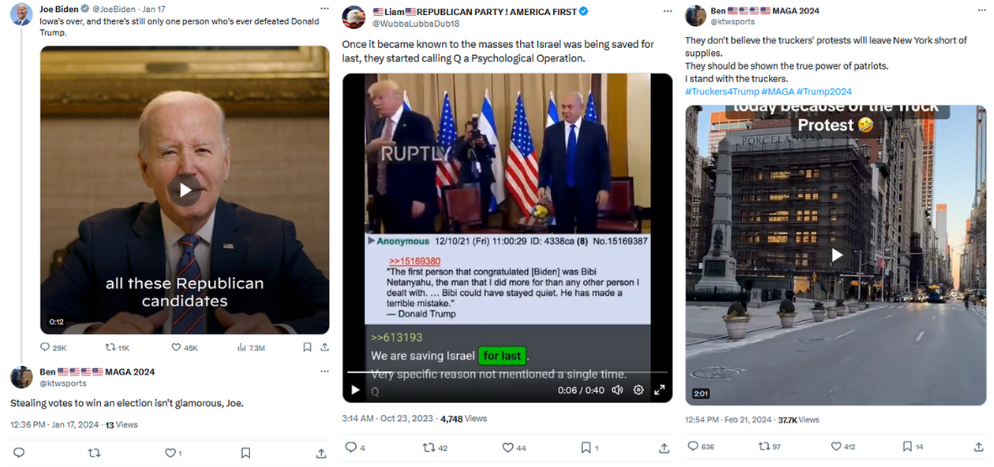

During this period the account frequently posted Spamouflage’s AI-generated ‘movie posters’, as discussed above, as well as posting anti-Biden and pro-Trump texts in English. On one occasion, on 13 May, the account operator appears to have forgotten to translate their content, instead responding in Mandarin to a tweet from the official Trump Campaign account.

Initially its posts generated no engagement, authentic or artificial. However, in mid-May 2023 it began engaging with genuine Trump supporters. By the end of May, the account’s posts were generating double-digit engagements and it has continued to generate consistent double or triple-digit engagements on most of its posts until at least February 2024.

At some point during this period, the account subscribed to X’s Premium service, and is presumably benefiting from features including an algorithmic boost on its replies. Numerous reports have identified abuse of the X Premium service by state-linked influence operations, terrorist organisations and garden-variety scammers and fraudsters. The extremely deep cuts made to Trust and Safety teams on the platform in 2023 are likely to be a contributing factor in the failure to detect and prevent this kind of abuse.

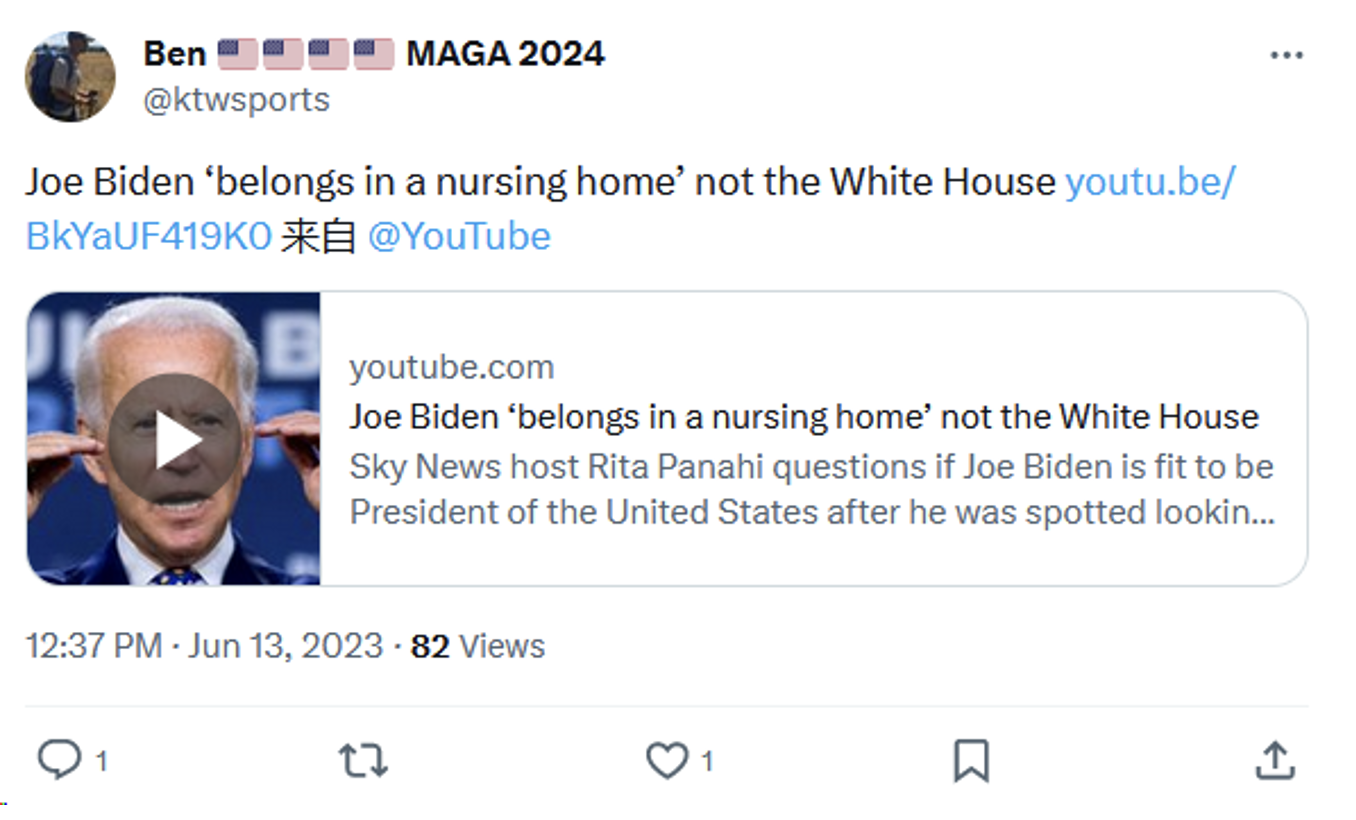

A second MAGAflage account, @ktwsports, has been identified as part of the network because it has posted multiple word-for-word posts and images from known Spamouflage accounts. It was created in 2010 but either was inactive or has had its earlier content deleted. The earliest currently available post was on 18 April 2023, when the account began amplifying posts implying that politicians, in particular Joe Biden, are paedophiles, linked to Jeffrey Epstein, and potentially Satanic.

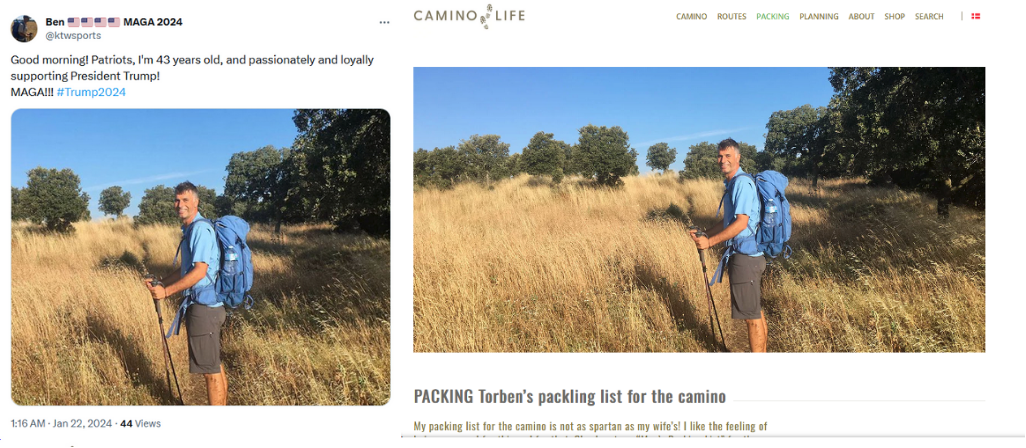

The account has been working to build a persona as an American man living in Los Angeles. On 22 January it posted a picture of a man and the caption “Good morning! Patriots, I’m 43 years old, and passionately and loyally supporting President Trump!” Both this image and the profile picture were taken from a travel blog belonging to a Danish man whose other content gives no indication of support for Donald Trump.

Based on the occasional grammatical and spelling errors, the content appears to be written manually rather than generated using AI tools. Other slip-ups include a YouTube video being shared using a browser set to Mandarin, resulting in the word ‘from’ appearing in Mandarin in the post.

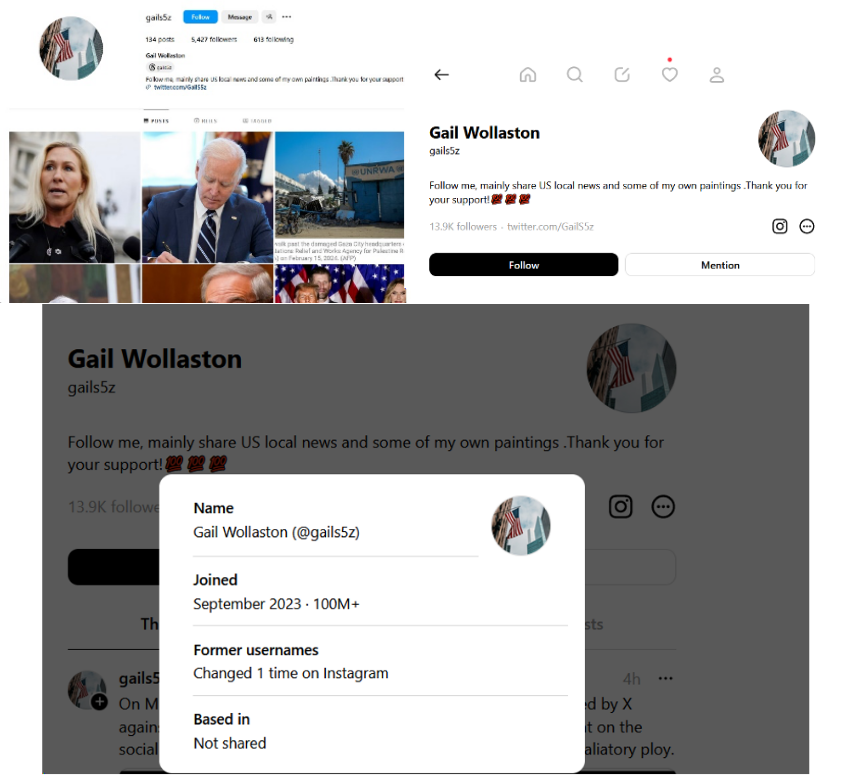

At least two other accounts have also made the switch from standard Spamouflage activity to this more tailored and convincing pro-MAGA content. One, @GailS5z, was created in 2016 but its earliest remaining activity begins on 28 February 2023.

It posted predominantly in Mandarin, sharing Spamouflage content, until 16 April 2023. The account then went silent for several weeks, returning on 8 May posting in English. It then posted Spamouflage ‘movie posters’ alongside food and travel content, usually Chinese dishes or regions of China. As of March 2024, it has moved to a similar content strategy to @ktwsports and @WubbaLubbaDub18, posting videos or images along with commentary, usually with an anti-Biden and pro-Trump framing.

So far, this account appears to have been less successful than @WubbaLubbaDub18 in garnering a large authentic audience. As of late March @WubbaLubbaDub18 and @GailS5z have begun retweeting some of each other’s content, perhaps in an effort to boost @GailS5z’s audience.

Intriguingly, linked accounts for @GailS5z have been created on Instagram and Threads. In 2021 Graphika observed at least one multi-platform Spamouflage persona presenting as a Chinese woman, but as far as ISD is aware, this is one of the first documented efforts by Spamouflage to create a consistent multi-platform American persona.

All of the MAGAflage accounts promote pro-Trump, anti-Biden, anti-liberal and anti-‘woke’ narratives, picking up on culture war issues and the outrage du jour in US right-wing information spaces. This includes dog-whistling at or overtly promoting conspiracy theories, such as those implying election fraud in 2020 or the potential for interference in 2024, and references to QAnon. Some of the content is original, but some is copied from viral tweets by large non-Spamouflage accounts.

In an interesting exchange on Threads, @GailS5z appears to have originally posted a claim that Letitia James and Arthur Engoron were seeking to “cheat” Trump in the 2024 election. After pushback from multiple, apparently authentic Threads users, the account appears to have edited the original post to use much more neutral language. In a reply to one of its critics, the account wrote, “I’m just saying that someone did something to manipulate our election.” This comment is intriguing because it more or less spells out what message the account is seeking to promote through its activity.

In a truly remarkable example of how successful this MAGAflage approach can be, on 18 February 2024 the @ktwsports account shared a video from Russian state media organisation, RT. The clip and accompanying text alleged that Biden and the CIA had sent a neo-Nazi leader to fight in Ukraine with the Azov Battalion; records show the man was in fact in Florida and under active monitoring as part of his parole during the time he claims to have been fighting in Ukraine.

The post was shared by infamous US conspiracy theorist Alex Jones, with more than 3,200 shares, 7,100 likes and 365,500 views as of 4 March. The original @ktwsports post had also been shared 567 times, liked 921 times and seen by more than 416,100 users.

The case of the Huawei Mate 60 Pro phone is an example of how this approach has the potential to be more effective at spreading pro-CCP narratives among real Trump supporters than the usual Spamouflage tactics.

In September 2023 Spamouflage, along with other parts of the CCP propaganda apparatus, began running a narrative that Huawei’s chip innovations were symbolic of a Chinese victory over US tech sanctions intended to hinder China’s progress.

As with almost all of Spamouflage’s content, this appeared to generate little if any engagement from accounts outside the Spamouflage network. However, two posts on the topic from a MAGAflage account, which frame it as a failure of the Biden administration, generated hundreds of seemingly genuine engagements and 16,000 to 20,000 views respectively.

Another striking example of how these accounts can be used to promote pro-CCP narratives about US-China tech policy issues cropped up in late March 2024, as the US government debated passing legislation which would force Chinese company ByteDance to divest its ownership of TikTok or face a potential ban on TikTok in US-owned app stores. @GailS5z, @ktwsports and another evolved Spamouflage account, @d_anastasiadis,[1] have both posted about the TikTok ban, with the former calling it ‘true authoritarianism’ and the latter suggesting that Israel is the true sinister party behind the ban. @d_anastasiadis also appears to have had an associated TikTok account created on 20 March 2024.

It is possible that this handful of accounts are the only ones of their kind and that this is a completely isolated experiment which will go no further. But it is also possible that this is not the case, especially given Spamouflage’s usual pattern of operating at enormous scale. Newly created or repurposed accounts without a history of previous Spamouflage activity engaging in this behaviour would be very difficult for external researchers to detect. There may also be similar accounts active on the other side of politics supporting Biden, although no evidence of this has yet been found.

The ‘fo’ accounts

The ‘fo’ accounts represent a less successful attempt by Spamouflage to enter the ‘culture wars’. Many (but not all) of these accounts have handles consisting of ‘fo’ followed by eight digits; ‘fo’ or ‘互fo’ is a reference to follow-back, meaning that the account will follow back any account which follows it.
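For researchers triaging account lists, the handle pattern described above can be expressed as a simple regular expression. The following is a minimal sketch only, not ISD's method: the function name and the sample handles are hypothetical, and it assumes the pattern is exactly ‘fo’ followed by eight digits, matched case-insensitively. Because not all ‘fo’ accounts follow this pattern, and unrelated users may happen to match it, a hit is at most a weak signal that calls for manual review.

```python
import re

# Assumption: the handle is exactly "fo" followed by eight ASCII digits,
# with an optional leading "@". Case-insensitivity is also an assumption.
FO_HANDLE = re.compile(r"@?fo[0-9]{8}", re.IGNORECASE)


def looks_like_fo_handle(handle: str) -> bool:
    """Return True if the whole handle matches the 'fo' + eight digits pattern."""
    return FO_HANDLE.fullmatch(handle.strip()) is not None


# Made-up handles for illustration only; none are taken from the research.
sample_handles = ["fo12345678", "@fo00000001", "fo1234567", "example_user"]
flagged = [h for h in sample_handles if looks_like_fo_handle(h)]
```

Here `flagged` would contain only the first two sample handles, since the third has seven digits and the fourth does not start with ‘fo’.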

Most of the non-Spamouflage content originates from three accounts, with one in particular being the most prolific. This is then amplified by a circle of accounts which mostly just repost content, a fairly standard structure for the operation.

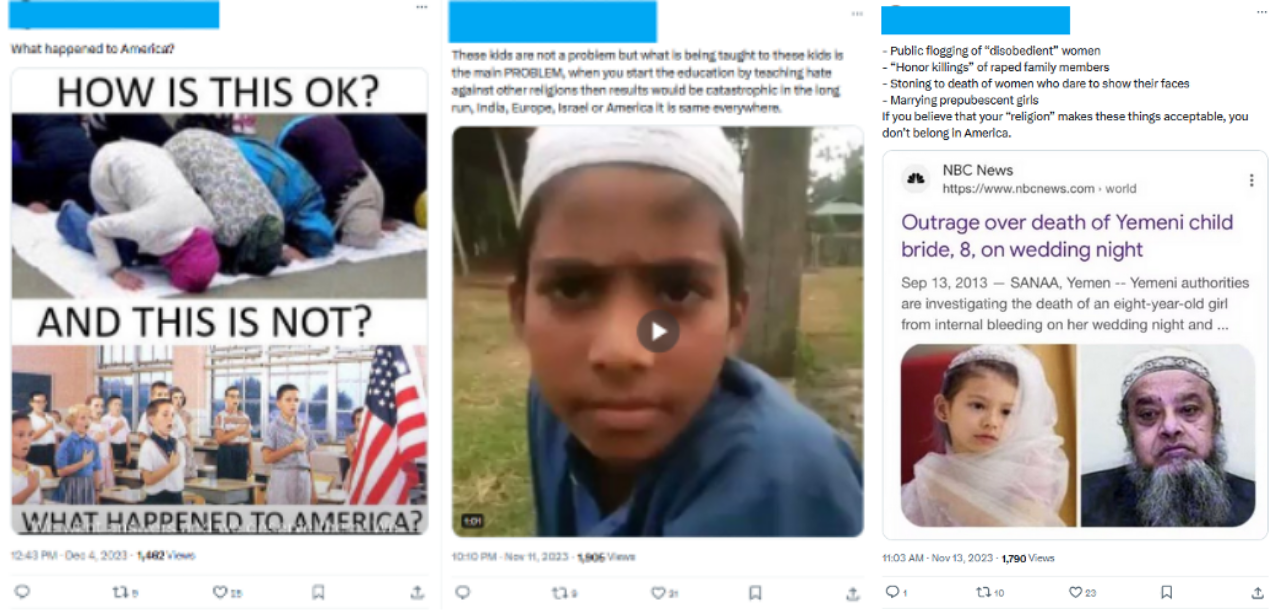

The three primary accounts shifted focus radically in summer 2023, engaging in aggressive and vitriolic posts about topics including antisemitism, sex education, drag queens, drugs and gun crime. The content generally appears intended to chime with mainstream right-wing audiences, except on the issue of Israel and the conflict in Gaza, where the accounts have adopted a consistently pro-Palestinian and anti-Israel stance.

There was also a striking tonal shift from previous content which, while highly critical of the US, was formal and almost old-fashioned or quaint. The language used in these newer posts is far harsher and sometimes includes profanity.

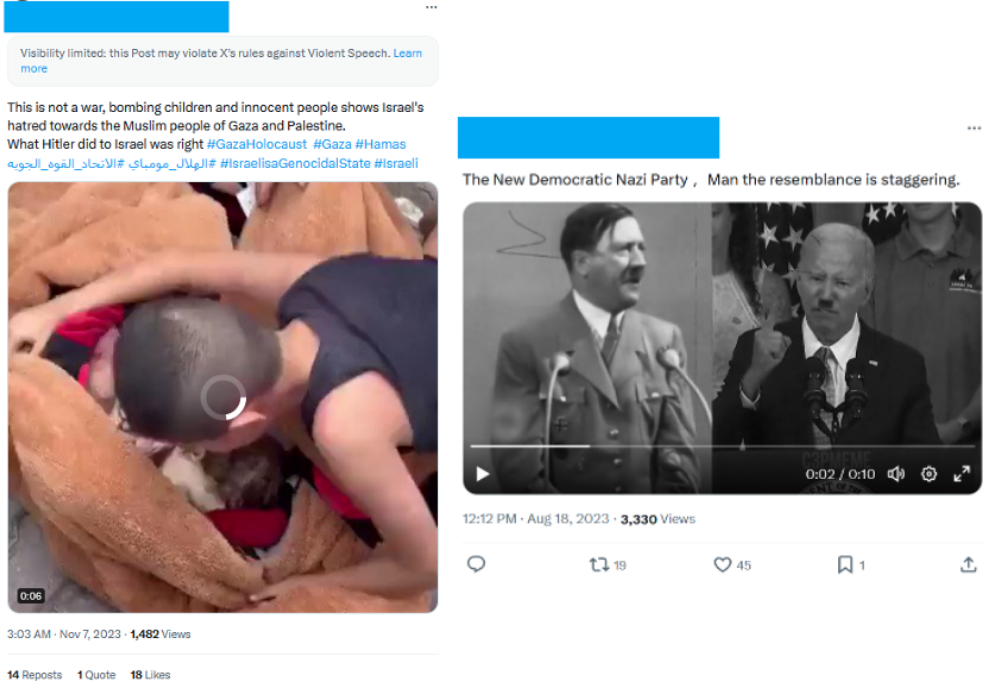

Some of the comments are shockingly extreme. In one example, the account posted a genuine video of what appears to be a grieving Palestinian child with the dead body of his baby sister. The text includes the sentence “What Hitler did to Israel was right”, presumably intended as a (historically garbled) reference to the Holocaust. In another case, the same account posted a video comparing Biden and the Democrats to Hitler and the Nazis and shared a meme (which does not appear to have been created by Spamouflage) about how America will be “Zio free.”

The accounts have also posted multiple very graphic images of dead and severely injured Palestinian children. These posts tend to be both anti-US and anti-Israel, although the examples below (WARNING: GRAPHIC CONTENT) interestingly appear to be trying to stoke resentment amongst Americans against Israel.

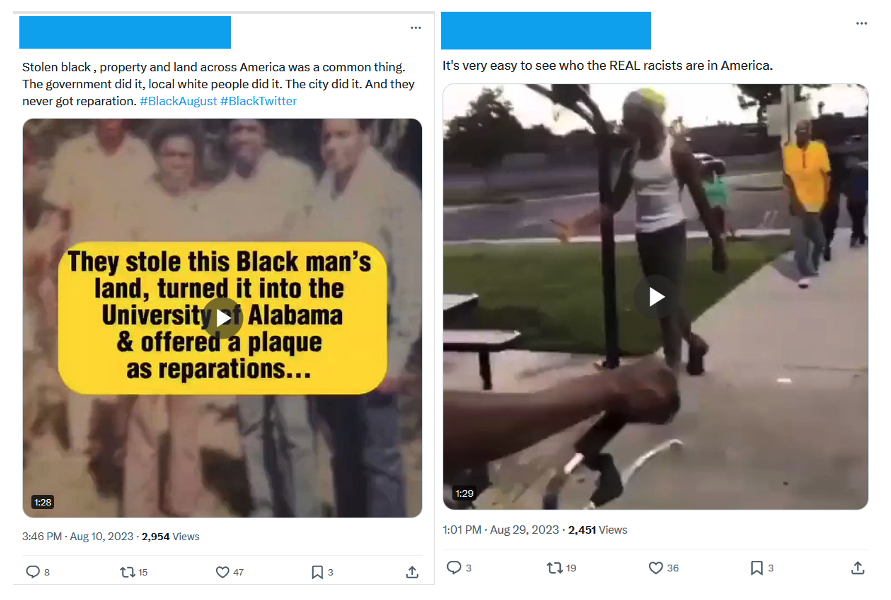

Somewhat confusingly, even as the ‘fo’ accounts support Palestine, they have also published numerous Islamophobic posts apparently aimed at American audiences. They have presented similarly conflicting content on race, both supporting reparations for Black Americans and asserting that white people are the true victims of racism inflicted by Black people.

LGBTQ+ people and drag performers have also been a topic for the ‘fo’ accounts. This includes belatedly jumping on the bandwagon of the controversy over the sex ed book Gender Queer and dog-whistling about drag queens, making what appears to be a pro-Trump comparison between Melania Trump and Jill Biden.

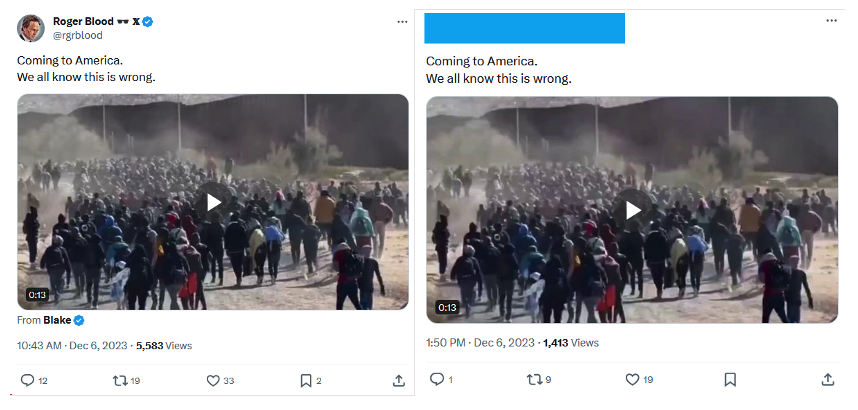

While some posts are original, others are copied from viral posts by larger non-Spamouflage accounts. For example, a post on 8 January 2024 from a ‘fo’ account about immigration was copied from a viral post made hours earlier by the account End Wokeness, with the hashtags #America and #AmericanFiction added. On 6 December, a similar post was copied from user @rgrblood. This suggests that the campaign operators may have identified topics of interest and are scanning for real viral content on those topics to copy.
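One way an outside researcher might check for this kind of copying is to normalise both texts before comparing them, so that appended hashtags do not hide a match. The sketch below is illustrative only and is not ISD's method: the function names are hypothetical, the example strings are placeholders rather than real posts, and the normalisation rules (dropping URLs, hashtags, punctuation and case) are assumptions.

```python
import re


def normalize(text: str) -> str:
    """Reduce a post to lowercase words, dropping URLs, hashtags and punctuation."""
    text = re.sub(r"https?://\S+", " ", text)  # remove links first
    text = re.sub(r"#\w+", " ", text)          # remove hashtags such as #America
    text = text.casefold()
    text = re.sub(r"[^\w\s]", " ", text)       # remove remaining punctuation
    return " ".join(text.split())              # collapse whitespace


def is_likely_copy(candidate: str, original: str) -> bool:
    """True when two posts are identical once hashtags and formatting are ignored."""
    return normalize(candidate) == normalize(original)


# Placeholder strings, not real posts.
original_post = "Placeholder text of a viral post."
candidate_post = "Placeholder text of a viral post. #America #AmericanFiction"
unrelated_post = "A different post entirely."
```

With these placeholders, `is_likely_copy(candidate_post, original_post)` is true and `is_likely_copy(unrelated_post, original_post)` is false. Exact matching after normalisation would miss paraphrased or lightly edited copies, so a fuzzy similarity measure may be needed in practice.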

Unlike MAGAflage, as of early February 2024, the ‘fo’ accounts do not appear to be succeeding in breaking out of the Spamouflage bubble. Despite a small number of seemingly organic comments on some posts, engagement is overwhelmingly from what appear to be other Spamouflage accounts.

Conclusion

The accounts examined in this Dispatch represent two small-scale but evolving strategies for the Spamouflage campaign. It is important not to overstate their scale or impact at this stage.

However, these are nonetheless notable developments which will be important to monitor. A particular concern is the possibility that there either already are – or that in the future there will be – many more ‘MAGAflage’ style accounts (or similar accounts supporting the Biden campaign) which evade the usual methods for detecting Spamouflage activity and are successful in generating real engagement.

Endnotes

[1] The @d_anastasiadis account presents itself as a Greek social media influencer, but posts regularly about US political issues and the war in Gaza. It appears that it initially may have belonged to an authentic Greek user, until April 2021 when it abruptly shifted from posting in Greek to posting in Mandarin. There is a long history of Spamouflage using hacked and stolen accounts, in some cases potentially purchased from account brokers. The account behaved like a standard Spamouflage account in both English and Mandarin until May 2022 when it began posting entirely in English. This account is not presenting as a Trump or MAGA supporter, but it is actively engaging on US political topics and in particular the war in Gaza from a pro-Palestinian and anti-Israel perspective, and has engaged with both @WubbaLubbaDub18 and @GailS5z.