Why are Online Book Retailers Recommending Anti-Vax Content to Buyers?

7th October 2021

By Elise Thomas

In April, ISD published a report which found that Amazon’s algorithms were helping to propel users towards literature promoting extremism and conspiracy theories. This included recommending anti-vaccination books as the top results to users who searched for basic terms such as “vaccination.”

As of September, this problem persists on Amazon. While Amazon’s market dominance arguably makes it the most concerning example, further research by ISD shows that the problem extends to many other online book retailers.

_________________________________________________________________________

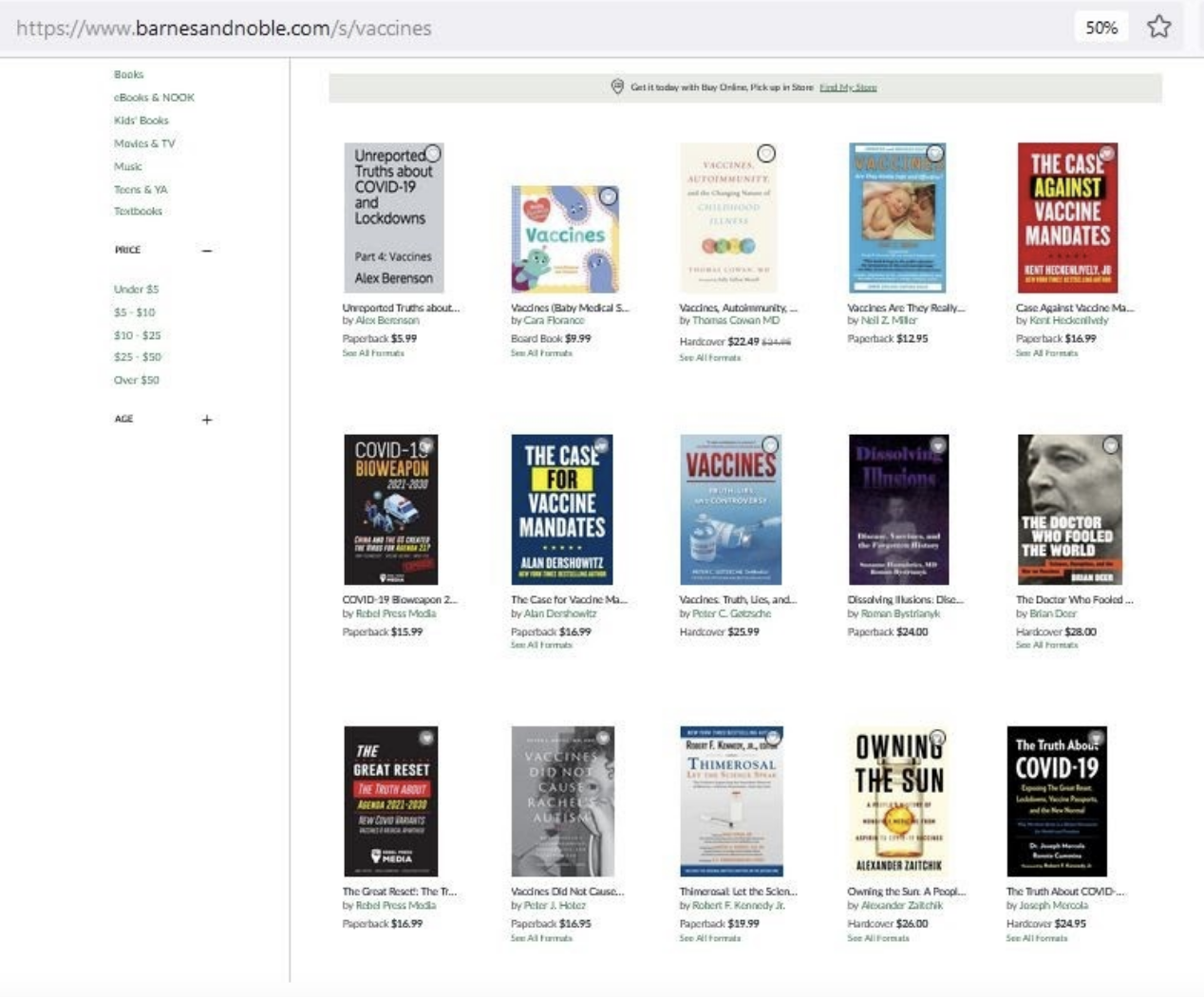

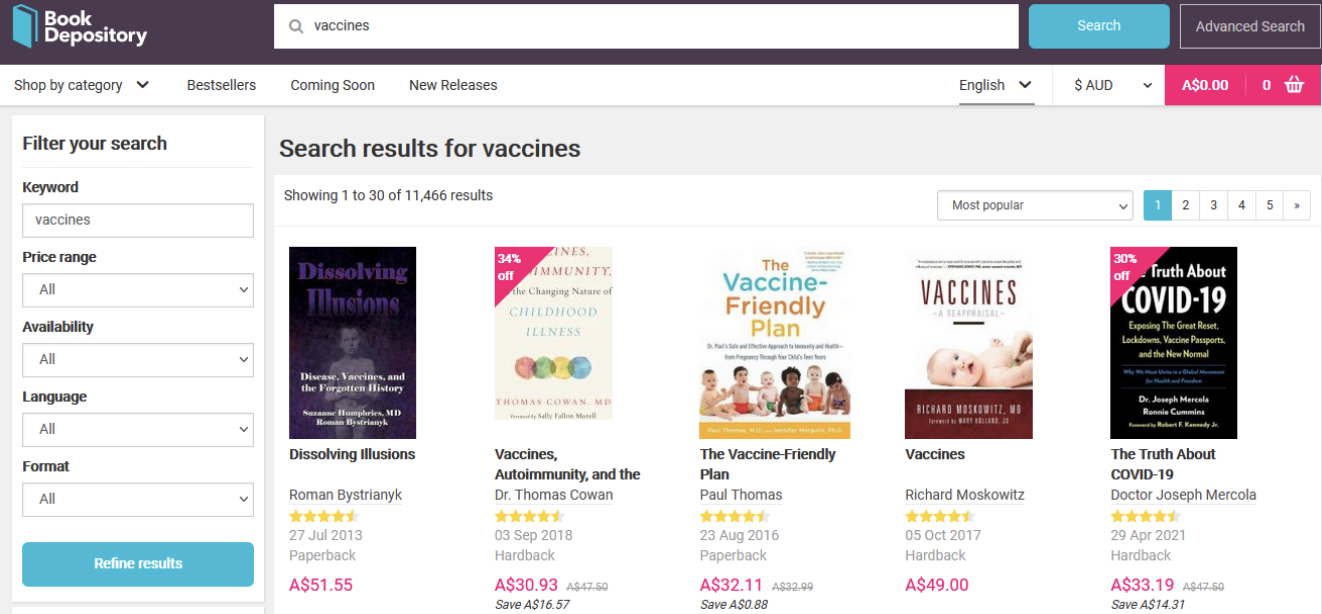

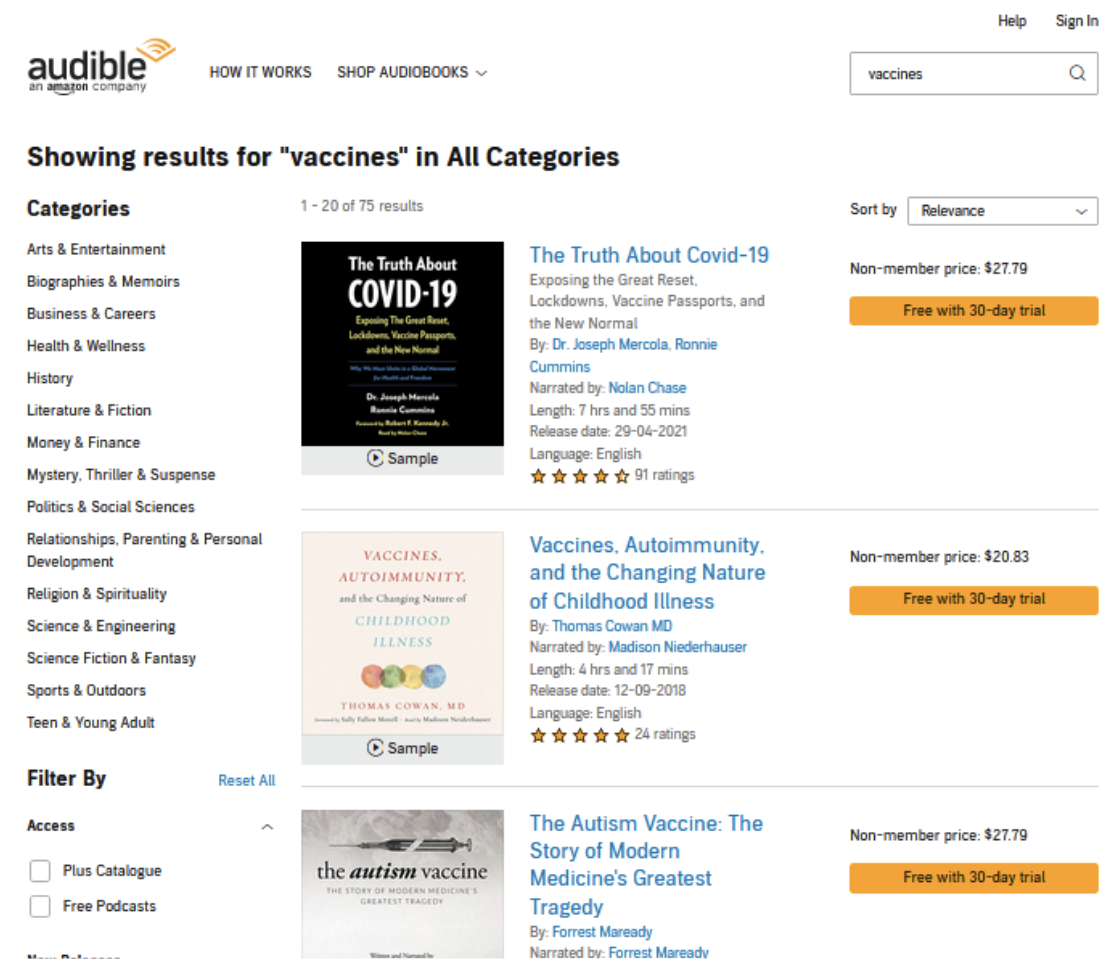

ISD’s research has found that anti-vaccination literature is being pushed to the top of search results for basic search terms relating to vaccines, beating out evidence-based information about vaccines. A search for the term “vaccines” at the online book retailers Barnes and Noble, Book Depository, Audible, Thrift Books and Google Play returned multiple anti-vaccination and conspiracy books as top results.

Barnes and Noble: A search for “vaccines” on Barnes and Noble’s website yielded multiple anti-vaccination and COVID-19 conspiracy theory books as top results.

Book Depository: A search for “vaccines” on Book Depository returned similar results, including a discounted listing for The Truth About COVID-19: Exposing The Great Reset, Lockdowns, Vaccine Passports, and the New Normal. This COVID-19 conspiracy book was written by Dr Joseph Mercola, a high-profile figure in conspiracy circles. Researchers have identified Mercola as “a chief spreader of coronavirus misinformation online”, and he was recently banned from YouTube for promoting anti-vaccination conspiracy theories.

For each of these retailers, such problematic results likely stem from the same dynamic: people who are against the idea of vaccination will generally be more interested in buying books about vaccines than those who support vaccination.

Algorithms which are designed to recommend books based on their popularity therefore learn that anti-vaccine books are more likely to sell, and so they rank those items at the top of the search results for other potential buyers to see. This happens automatically, and in some cases the booksellers themselves may be unaware of the role their algorithms are playing in actively promoting this literature to their customers.
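As a rough illustration of this feedback loop, the toy Python sketch below ranks results purely by past sales. The book names, sales figures, and the assumption that the top slot attracts more new buyers than lower slots are all hypothetical. Real retailers’ ranking systems are not public and are certainly more complex. The sketch only shows how a small initial lead in sales can become self-reinforcing when popularity is the only signal.

```python
from dataclasses import dataclass


@dataclass
class Book:
    title: str
    sales: int  # past sales: the only signal this toy ranker looks at


def rank_by_popularity(books: list[Book]) -> list[Book]:
    """Order results by past sales, highest first. No relevance or accuracy signal."""
    return sorted(books, key=lambda b: b.sales, reverse=True)


def simulate_round(books: list[Book], buyers_per_slot: list[int]) -> list[Book]:
    """Hypothetical assumption: the book shown in slot i gains buyers_per_slot[i] sales."""
    ranked = rank_by_popularity(books)
    for book, extra in zip(ranked, buyers_per_slot):
        book.sales += extra
    return ranked


if __name__ == "__main__":
    # Hypothetical starting point: Book B begins slightly ahead because its
    # audience is more motivated to buy books on the topic.
    books = [Book("Book A", 100), Book("Book B", 120)]
    for _ in range(3):
        # The top slot is assumed to attract far more buyers than the second slot.
        simulate_round(books, [30, 5])
    print([(b.title, b.sales) for b in rank_by_popularity(books)])
    # prints [('Book B', 210), ('Book A', 115)]
```

In this toy run, Book B’s 20-sale lead grows to 95 after three rounds, even though nothing about either book’s accuracy was ever considered.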

This is fundamentally the same problem that has confronted social media platforms time and time again, as conspiracy theories and misinformation often generate a high level of engagement from users. Algorithms designed to optimise for engagement – whether that engagement is clicking a like button or closing a book sale – respond by pushing that content in front of other users, with the result that false or misleading information and dangerous beliefs are amplified to new audiences.

Audible: Mercola’s book was also the top result for “vaccines” on Audible, an audiobook platform owned by Amazon.

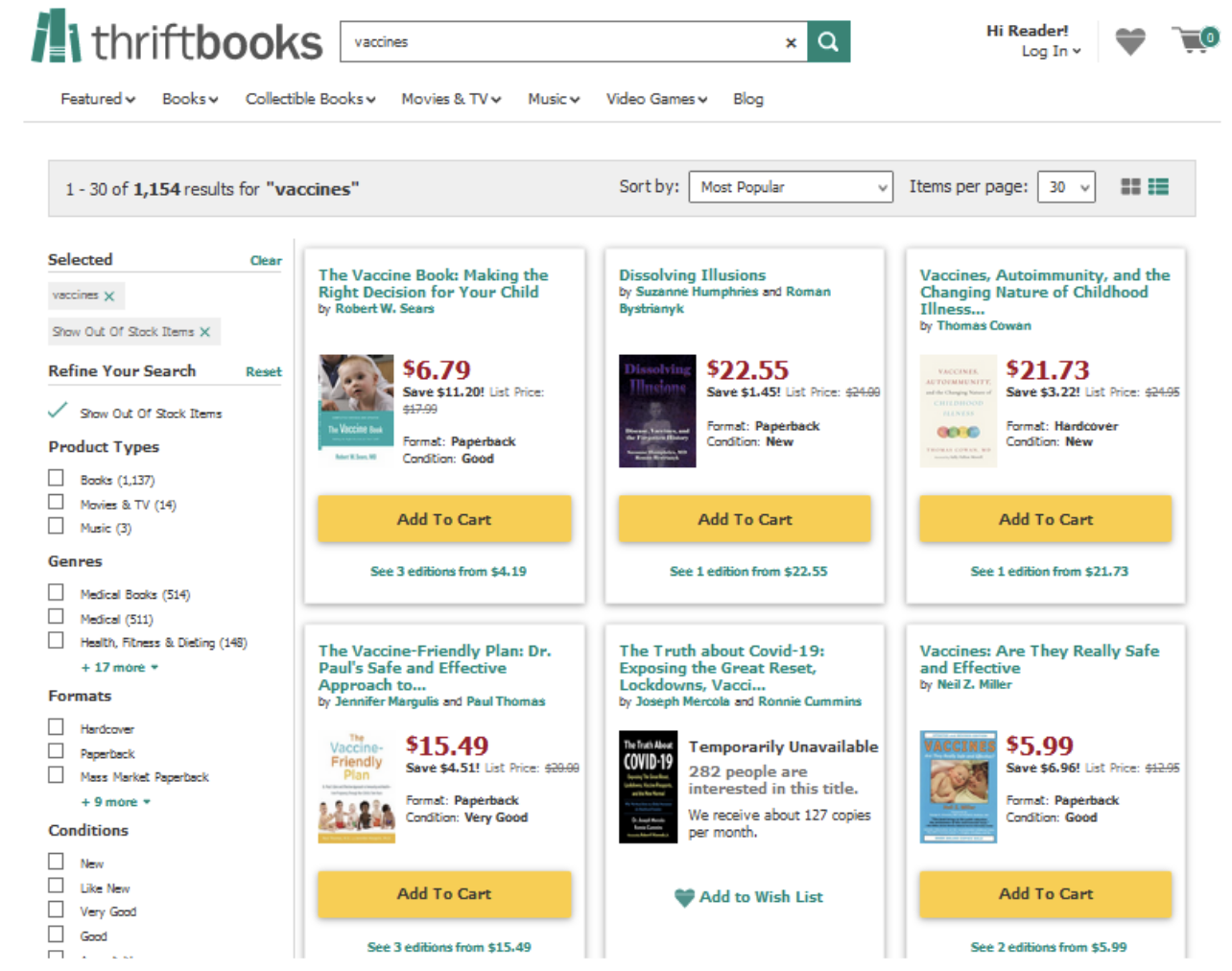

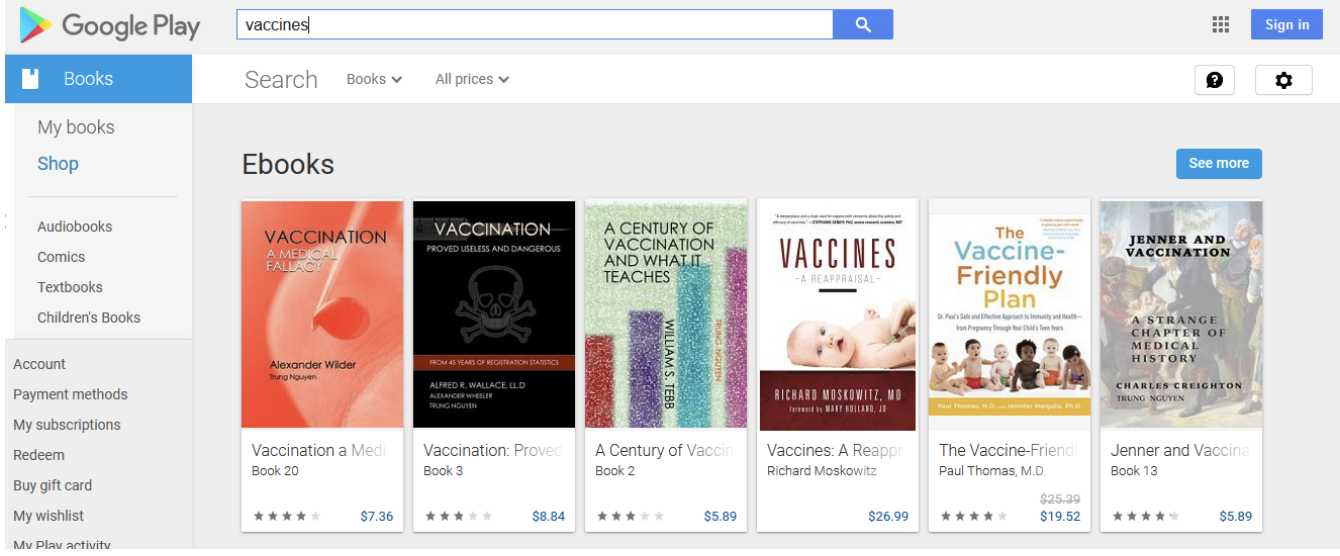

Thrift Books: On Thrift Books, a search for “vaccines” showed that Mercola’s book was sold out due to high demand; however, a number of other anti-vaccination books were still among the top results.

Google Play: On Google Play’s Ebooks section, Mercola’s book was not amongst the top results for “vaccines”. Instead, the top two search results were Vaccination: A Medical Fallacy and Vaccination: Proved Useless and Dangerous.

Social media companies are rightly coming under ever greater scrutiny for the role their algorithms play in spreading, amplifying and monetising content that can lead to harm, and they are certainly far from solving the problem. However, they have found some ways to mitigate it, including more active content moderation and changes to how their algorithms operate on particular topics.

When it comes to content moderation, there is a legitimate debate to be had around freedom of speech and freedom of publication. Booksellers may have different views on whether these books have a right to be sold on their platforms. However, the right to publish and sell books does not necessarily imply a right to be boosted to the top of search results, especially results for generic terms. Online booksellers could intervene in search rankings for key terms like “vaccines”, for example by pushing books that clearly promote conspiracy theories and disinformation (like Mercola’s books) to the bottom of the results page.
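A minimal sketch of the kind of intervention described above, written in Python, might look like the following. It assumes a retailer already has an ordered list of results, a hypothetical set of titles it has flagged as promoting disinformation, and a hypothetical list of key search terms. How such flags would be assigned is a separate policy question that the sketch does not address. Flagged titles are moved to the bottom rather than removed, which matches the distinction drawn here between a book’s availability and its being boosted.

```python
def rerank(
    query: str,
    ranked_titles: list[str],
    flagged: set[str],
    key_terms: set[str],
) -> list[str]:
    """Demote flagged titles to the bottom of results for key search terms only.

    All inputs are hypothetical: `flagged` and `key_terms` stand in for
    whatever lists a retailer might maintain. Relative order within the
    kept and demoted groups is preserved, and nothing is removed.
    """
    if query.strip().lower() not in key_terms:
        # Searches outside the key terms are left exactly as ranked.
        return list(ranked_titles)
    kept = [t for t in ranked_titles if t not in flagged]
    demoted = [t for t in ranked_titles if t in flagged]
    return kept + demoted


if __name__ == "__main__":
    results = ["Flagged 1", "Guide", "Flagged 2", "Textbook"]
    print(rerank("vaccines", results, {"Flagged 1", "Flagged 2"}, {"vaccines"}))
    # prints ['Guide', 'Textbook', 'Flagged 1', 'Flagged 2']
```

Restricting the change to a short list of generic terms keeps the intervention narrow: a buyer who searches for a specific title can still find it.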

Ultimately, online booksellers – like social media companies – trade in ideas and information, and use algorithms to help them do it more effectively. They profit directly from the sale of books, including those that promote factually wrong and potentially harmful beliefs to their customers, and they funnel money to the authors of these books, rewarding and incentivising the creation of this literature. With this in mind, as we demand accountability from social media platforms for the spread of disinformation, we should also hold online booksellers accountable for their role in spreading it.

*Research for this article was conducted on 5 October 2021.

Elise Thomas is an OSINT Analyst at ISD. She has previously worked for the Australian Strategic Policy Institute, and has written for Foreign Policy, The Daily Beast, Wired and others.